EWY: The Memory Tax on Every AI Chip

Every Nvidia GPU that powers AI runs on a specific type of memory called HBM (High Bandwidth Memory). Not regular RAM. Not storage. A specialized, extremely fast memory that sits in the same package as the GPU, right beside the compute die, and feeds it data fast enough to keep up with the compute.

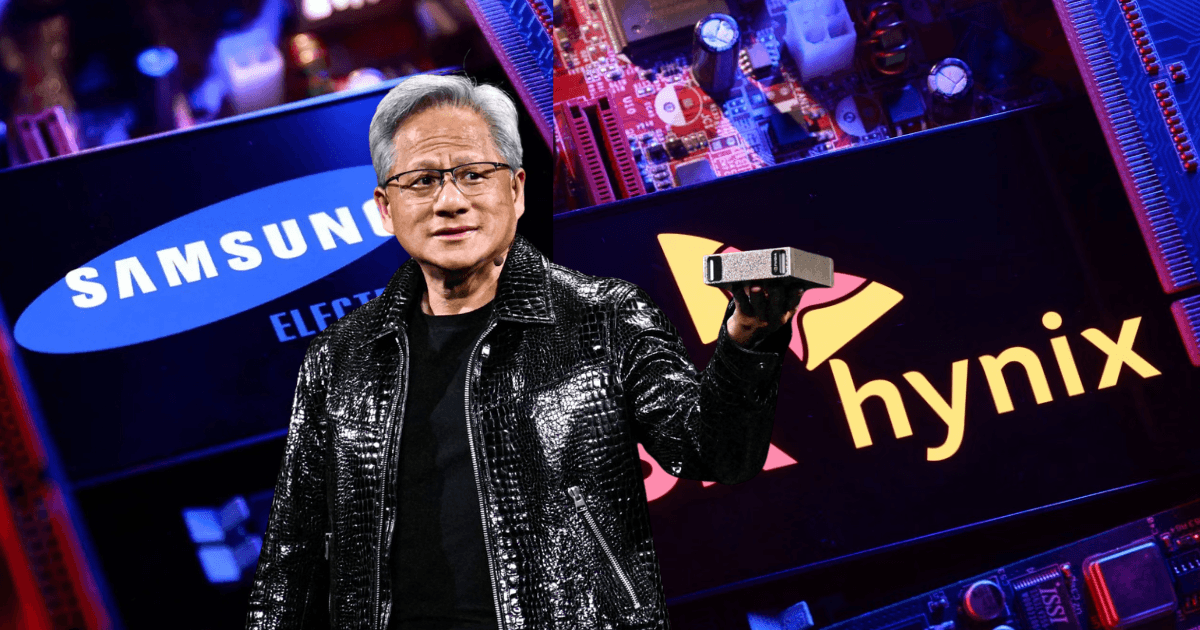

There is one company that dominates its production. SK Hynix, a South Korean chipmaker. They hold 62% of the global HBM market and supply most of the memory for Nvidia's most powerful chips. Every Blackwell GPU shipped in 2025 ran on their memory. Every Vera Rubin GPU shipping in 2026 will need even more of it: 288GB per chip versus 192GB on Blackwell, a 50% increase.
